Algorithms

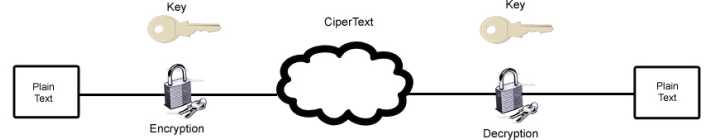

Every current encryption scheme is based upon an algorithm, a step-by-step, recursive computational procedure for solving a problem in a finite number of steps. The cryptographic algorithm (what is commonly called the encryption algorithm or cipher) is made up of mathematical steps for encrypting and decrypting information. Figure 2.3 shows a diagram of the encryption and decryption process and its parts.

The best algorithms are always public algorithms that have been published for peer review by other cryptographic and mathematical experts. Publication is important, as any flaws in the system can be revealed by others before actual use of the system. Several proprietary algorithms have been reverse-engineered, exposing the confidential data the algorithms try to protect. Examples of this include the decryption of Nikon's proprietary RAW format white balance encryption, and the cracking of the Exxon Mobil SpeedPass RFID encryption. The use of a proprietary system can actually be less secure than using a published system: proprietary systems are not made available for testing by potential crackers, whereas public systems are published for precisely this purpose.

A system that maintains its security after public testing can be reasonably trusted to be secure. A public algorithm can be more secure because good systems rely on the encryption key to provide security, not the algorithm itself. The actual steps for encrypting data can be published, because without the key, the protected information cannot be accessed. A key is a special piece of data used in both the encryption and decryption processes. The algorithms stay the same in every implementation, but a different key is used for each, which ensures that even if someone knows the algorithm you use to protect your data, that person cannot break your security. A classic example of this is the early shift cipher, known as Caesar's cipher.
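To make the split between algorithm and key concrete, here is a minimal sketch of a shift cipher in Python. The function names (`caesar`, `encrypt`, `decrypt`) and the sample message are illustrative assumptions, not taken from the text. The algorithm is fully visible, and only the key (the shift amount) varies between uses.

```python
import string

ALPHABET = string.ascii_uppercase  # the 26 letters A-Z


def caesar(text: str, key: int) -> str:
    """Shift each letter by `key` positions; leave other characters alone."""
    out = []
    for ch in text.upper():
        if ch in ALPHABET:
            out.append(ALPHABET[(ALPHABET.index(ch) + key) % 26])
        else:
            out.append(ch)
    return "".join(out)


def encrypt(plaintext: str, key: int) -> str:
    return caesar(plaintext, key)


def decrypt(ciphertext: str, key: int) -> str:
    # Decryption is the same algorithm run with the opposite shift.
    return caesar(ciphertext, -key)


message = "ATTACK AT DAWN"
ciphertext = encrypt(message, 3)
print(ciphertext)               # DWWDFN DW GDZQ
print(decrypt(ciphertext, 3))   # ATTACK AT DAWN
print(decrypt(ciphertext, 4))   # wrong key: does not recover the message
```

A shift cipher has only 25 useful keys, so it illustrates the role of the key but offers no real protection; modern ciphers keep the same public-algorithm, secret-key structure with a vastly larger key space.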

Hashing

Hashing functions are commonly used encryption methods. A hashing function is a special mathematical function that performs one-way encryption, which means that once the algorithm is processed, there is no feasible way to use the ciphertext to retrieve the plaintext that was used to generate it. Also, ideally, there is no feasible way to generate two different plaintexts that compute to the same hash value. Figure 3.2 shows a generic hashing process.
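The properties described above can be observed with Python's standard `hashlib` module. This is a small sketch: SHA-256 is used only as a convenient example function, and the helper name `digest` and the sample messages are assumptions made for illustration.

```python
import hashlib


def digest(data: bytes) -> str:
    """Return the SHA-256 hash of `data` as a hex string."""
    return hashlib.sha256(data).hexdigest()


a = digest(b"transfer $100 to Alice")
b = digest(b"transfer $100 to Alice")
c = digest(b"transfer $900 to Alice")

print(a == b)   # True: the same input always gives the same hash
print(a == c)   # False: changing one character changes the hash
print(len(a))   # 64 hex characters, i.e. 256 bits, whatever the input size
```

Nothing in the output lets a reader work backward to the original message, which is what is meant by one-way.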

Common uses of hashing functions are storing computer passwords and ensuring message integrity. The idea is that hashing can produce an effectively unique value that corresponds to the data entered, but the hash value is also reproducible by anyone else running the same algorithm against the same data.

[...]

SHA-1, first published as SHA in 1993 and revised in 1995, was designed as the algorithm to be used for secure hashing in the U.S. Digital Signature Standard (DSS). It is modeled on the MD4 algorithm and implements fixes in that algorithm discovered by the NSA. It creates message digests 160 bits long that can be used by the Digital Signature Algorithm (DSA), which can then compute the signature of the message. This is computationally simpler, as the message digest is typically much smaller than the actual message: a smaller message means less work.

SHA-1 works, as do all hashing functions, by applying a compression function to the data input. It accepts an input of fewer than 2^64 bits and compresses it down to a hash of 160 bits. SHA-1 works in block mode, separating the data into words first, and then grouping the words into blocks. The words are 32-bit strings, conventionally written in hex; grouped together as 16 words, they make up a 512-bit block. To bring the input up to a multiple of 512 bits, the message is padded with a single 1 bit, then zeros, and finally a 64-bit integer describing the original length of the message.
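The padding and block structure can be sketched in a few lines of Python. This shows only the preprocessing step, not the compression function, and it assumes the byte-oriented convention from the SHA-1 specification: a 0x80 byte (a 1 bit followed by seven 0 bits), zero bytes, and then the original bit length as a 64-bit big-endian integer. The function names are illustrative.

```python
import struct

BLOCK_BYTES = 64  # 512 bits per block


def pad_message(message: bytes) -> bytes:
    """Pad `message` to a multiple of 512 bits, SHA-1 style."""
    bit_length = len(message) * 8
    padded = message + b"\x80"                            # 1 bit, then zeros
    padded += b"\x00" * ((56 - len(padded)) % BLOCK_BYTES)
    padded += struct.pack(">Q", bit_length)               # 64-bit length field
    return padded


def split_blocks(padded: bytes):
    """Yield each 512-bit block as a tuple of sixteen 32-bit words."""
    for offset in range(0, len(padded), BLOCK_BYTES):
        yield struct.unpack(">16I", padded[offset:offset + BLOCK_BYTES])


blocks = list(split_blocks(pad_message(b"abc")))
print(len(blocks))                           # 1
print([f"{w:08x}" for w in blocks[0]])       # first word 61626380, last 00000018
```

For the three-byte message "abc", the first word holds the message bytes plus the 0x80 marker, and the last word holds the length, 24 bits (0x18).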

At one time, SHA-1 was one of the more secure hash functions, but it has been found vulnerable to a collision attack. Thus, most people are suggesting that implementations of SHA-1 be moved to one of the other SHA versions. These longer versions, SHA-256, SHA-384, and SHA-512, all have longer hash results, making them more difficult to attack successfully. The added security and resistance to attack in these longer versions does require more processing power to compute the hash.

SHA-256

SHA-256 is similar to SHA-1 in that it also accepts input of fewer than 2^64 bits and reduces that input to a hash, in this case 256 bits instead of SHA-1's 160. Defined in FIPS 180-2 in 2002, SHA-256 is listed as an update to the original FIPS 180 that defined SHA. Like SHA-1, SHA-256 uses 32-bit words and 512-bit blocks, and padding is added until the entire message is a multiple of 512 bits. SHA-256 uses sixty-four 32-bit words, eight working variables, and results in a hash value of eight 32-bit words, hence 256 bits. SHA-256 is more secure than SHA-1, but because the two algorithms are similar, the attack basis for SHA-1 may carry over to SHA-256 as well, although no practical SHA-256 collision has been demonstrated. The SHA standard does have two longer versions, however.
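The "eight 32-bit words" structure of the result is easy to see by unpacking a digest. The sketch below uses Python's `hashlib` and `struct`; the input "abc" is simply a common test message chosen for illustration.

```python
import hashlib
import struct

digest = hashlib.sha256(b"abc").digest()

# Interpret the 32-byte digest as eight big-endian 32-bit words.
words = struct.unpack(">8I", digest)

print(len(digest) * 8)                  # 256
print(len(words))                       # 8
print([f"{w:08x}" for w in words])      # first word is ba7816bf
```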

SHA-384

SHA-384 is also similar to SHA-1, but it handles larger sets of data. SHA-384 will accept fewer than 2^128 bits of input, which it pads to a whole number of 1024-bit blocks. SHA-384 also uses 64-bit words instead of SHA-1's 32-bit words. It uses six 64-bit words to produce the 384-bit hash value.

SHA-512

SHA-512 is structurally similar to SHA-384. It will accept the same input of fewer than 2^128 bits and uses the same 64-bit word size and 1024-bit block size. SHA-512 does differ from SHA-384 in that it uses eight 64-bit words for the final hash, resulting in 512 bits.
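The hash and block sizes quoted for the SHA variants can be checked against an implementation. This short sketch uses Python's `hashlib`, whose hash objects expose `digest_size` and `block_size` in bytes; the table layout is just for illustration.

```python
import hashlib

print(f"{'algorithm':<10}{'hash bits':>10}{'block bits':>12}")
for name in ("sha1", "sha256", "sha384", "sha512"):
    h = hashlib.new(name)
    # digest_size and block_size are reported in bytes; convert to bits.
    print(f"{name:<10}{h.digest_size * 8:>10}{h.block_size * 8:>12}")
```

The output lists 160, 256, 384, and 512 hash bits, with 512-bit blocks for SHA-1 and SHA-256 and 1024-bit blocks for SHA-384 and SHA-512.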

Message Digest

Message Digest (MD) is the generic name for a family of algorithms that are designed to create a message digest or hash from data input into the algorithm. MD algorithms work in the same manner as SHA in that they use a secure method to compress the file and generate a computed output of a specified number of bits. They were all developed by Ronald L. Rivest of MIT.

MD2

MD2 was developed in 1989 and is in some ways an early version of the later MD5 algorithm. It takes a data input of any length and produces a hash output of 128 bits. It is different from MD4 and MD5 in that MD2 is optimized for 8-bit machines, whereas the other two are optimized for 32-bit machines. As with SHA, the input data is padded to become a multiple, in this case a multiple of 16 bytes. After padding, a 16-byte checksum is appended to the message. The message is then processed in 16-byte blocks.

MD4

MD4 was developed in 1990 and is optimized for 32-bit computers. It is a fast algorithm, but it can be subject to more attacks than more secure algorithms like MD5. Like MD2, it takes a data input of some length and outputs a digest of 128 bits. The message is padded to be 64 bits short of a multiple of 512 bits, and a 64-bit representation of the message's original length is then appended.

As with SHA, the message is then divided into blocks and also into 16 words of 32 bits. All blocks of the message are processed in three distinct rounds. The digest is then computed using a four-word buffer. The final four words remaining after compression are the 128-bit hash.

An extended version of MD4 computes the message in parallel and produces two 128-bit outputs, effectively a 256-bit hash. Even though a longer hash is produced, security has not been improved because of basic flaws in the algorithm. Cryptographer Hans Dobbertin has shown how collisions in MD4 can be found in under a minute using just a PC. This vulnerability to collisions applies to 128-bit MD4 as well as 256-bit MD4. Most people are moving away from MD4 to MD5 or a robust version of SHA.

MD5

MD5 was developed in 1991 and is structured after MD4 but with additional security to overcome the problems in MD4. Therefore, it is very similar to the MD4 algorithm, only slightly slower and more secure. MD5 creates a 128-bit hash of a message of any length. Like MD4, it segments the message into 512-bit blocks and then into sixteen 32-bit words. First, the original message is padded to be 64 bits short of a multiple of 512 bits. Then a 64-bit representation of the original length of the message is added to the padded value to bring the entire message up to a 512-bit multiple.
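The padding arithmetic can be worked through in a few lines of Python. This sketch computes only the lengths, not the hash itself, and it assumes the rule from the MD5 specification that padding is always applied (at least 1 bit and at most 512 bits), even when the message is already 64 bits short of a block boundary. The function names are illustrative.

```python
def md5_pad_bits(message_bits: int) -> int:
    """Number of padding bits needed to reach 448 mod 512 (always 1..512)."""
    return (447 - message_bits) % 512 + 1


def md5_padded_bits(message_bits: int) -> int:
    """Total length after padding and the 64-bit length field."""
    return message_bits + md5_pad_bits(message_bits) + 64


for n in (0, 24, 447, 448, 512):
    total = md5_padded_bits(n)
    print(f"{n:>4} message bits -> {md5_pad_bits(n):>3} pad bits, "
          f"{total} total ({total // 512} block(s))")
```

A 448-bit message is the interesting case: it already sits 64 bits short of a block boundary, yet under the assumed rule it still receives a full 512 bits of padding and spills into a second block.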