Security Basics

Computer security is a term that has many meanings and related terms. Computer security entails the methods used to ensure that a system is secure. Broadly, it must address the ability to control who has access to a computer system and its data, and what they can do with those resources.

Seldom in today's world are computers not connected to other computers in networks. This then introduces the term network security to refer to the protection of the multiple computers and other devices that are connected together in a network. Related to these two terms are two others, information security and information assurance, which place the focus of the security process not on the hardware and software being used but on the data that is processed by them. Assurance also introduces another concept, that of the availability of the systems and information when users want them.

Since the late 1990s, much has been published about specific lapses in security that have resulted in the penetration of a computer network or in the denial of access to or use of the network. Over the last few years, the general public has become increasingly aware of its dependence on computers and networks and consequently has also become interested in their security.

Leading the way in IT testing and certification tools, www.testking.com

As a result of this increased attention by the public, several new terms have become commonplace in conversations and print. Terms such as hacking, virus, TCP/IP, encryption, and firewalls now frequently appear in mainstream news publications and have found their way into casual conversations. What was once the purview of scientists and engineers is now part of our everyday life.

With our increased daily dependence on computers and networks to conduct everything from making purchases at our local grocery store to driving our children to school (any new car these days probably uses a small computer to obtain peak engine performance), ensuring that computers and networks are secure has become of paramount importance. Medical information about each of us is probably stored in a computer somewhere. So are financial information and data relating to the types of purchases we make and our store preferences (assuming we have and use a credit card to make purchases).

Making sure that this information remains private is a growing concern to the general public, and it is one of the jobs of security to help with the protection of our privacy. Simply stated, computer and network security is essential for us to function effectively and safely in today's highly automated environment.

The "CIA" of Security

Almost from its inception, the goals of computer security have been threefold: confidentiality, integrity, and availability - the "CIA" of security. Confidentiality ensures that only those individuals who have the authority to view a piece of information may do so. No unauthorized individual should ever be able to view data to which they are not entitled. Integrity is a related concept but deals with the modification of data. Only authorized individuals should be able to change or delete information. The goal of availability is to ensure that the data, or the system itself, is available for use when the authorized user wants it.

As a result of the increased use of networks for commerce, two additional security goals have been added to the original three in the CIA of security. Authentication deals with ensuring that an individual is who he claims to be. The need for authentication in an online banking transaction, for example, is obvious. Related to this is nonrepudiation, which deals with the ability to verify that a message has been sent and received so that the sender (or receiver) cannot refute sending (or receiving) the information.

The Operational Model of Security

For many years, the focus of security was on prevention.

If you could prevent somebody from gaining access to your computer systems and networks, you assumed that they were secure. Protection was thus equated with prevention. While this basic premise was true, it failed to acknowledge the realities of the networked environment of which our systems are a part. No matter how well you think you can provide prevention, somebody always seems to find a way around the safeguards. When this happens, the system is left unprotected. What is needed are multiple prevention techniques, along with technology to alert you when prevention has failed and ways to address the problem.

This results in a modification to the original security equation with the addition of two new elements - detection and response. The security equation thus becomes Protection = Prevention + (Detection + Response). This is known as the operational model of computer security. Every security technique and technology falls into at least one of the three elements of the equation.

Security Basics

An organization can choose to address the protection of its networks in three ways: ignore security issues, provide host security, and approach security at a network level. The last two, host and network security, have prevention as well as detection and response components.

If an organization decides to ignore security, it has chosen to utilize the minimal amount of security that is provided with its workstations, servers, and devices. No additional security measures will be implemented. Each "out-of-the-box" system has certain security settings that can be configured, and they should be. To protect an entire network, however, requires work in addition to the few protection mechanisms that come with systems by default.

Host Security

Host security takes a granular view of security by focusing on protecting each computer and device individually instead of addressing protection of the network as a whole. When host security is implemented, each computer is expected to protect itself. If an organization decides to implement only host security and does not include network security, it will likely introduce or overlook vulnerabilities. Many environments involve different operating systems (Windows, UNIX, Linux, and Macintosh), different versions of those operating systems, and different types of installed applications. Each operating system has security configurations that differ from other systems, and different versions of the same operating system can in fact have variations among them. Trying to ensure that every computer is "locked down" to the same degree as every other system in the environment can be overwhelming and often results in an unsuccessful and frustrating effort.

Network Security

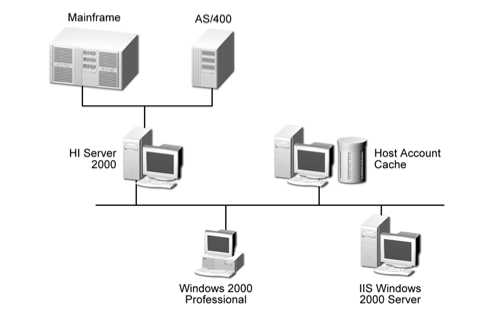

In some smaller environments, host security alone might be a viable option, but as systems become connected into networks, security should include the actual network itself. In network security, an emphasis is placed on controlling access to internal computers from external entities. This control can be through devices such as routers, firewalls, authentication hardware and software, encryption, and intrusion detection systems (IDSs).

Least Privilege

One of the most fundamental approaches to security is least privilege. This concept is applicable to many physical environments as well as network and host security. Least privilege means that an object (such as a user, application, or process) should have only the rights and privileges necessary to perform its task, with no additional permissions. Limiting an object's privileges limits the amount of harm that can be caused, thus limiting an organization's exposure to damage. Users may have access to the files on their workstations and a select set of files on a file server, but they have no access to critical data that is held within the database. This rule helps an organization protect its most sensitive resources and helps ensure that whoever is interacting with these resources has a valid reason to do so.
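As a minimal sketch of least privilege, the Python below models a subject that holds only explicitly granted (action, resource) pairs. The `Subject` class, the `is_allowed` helper, the user name, and the resource names are all hypothetical and chosen only to mirror the workstation, file server, and database example above.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Subject:
    """A user, application, or process with an explicit set of grants."""
    name: str
    # Each grant is an (action, resource) pair; nothing is granted by default.
    grants: frozenset = field(default_factory=frozenset)


def is_allowed(subject: Subject, action: str, resource: str) -> bool:
    """Return True only if this exact action on this resource was granted."""
    return (action, resource) in subject.grants


# Hypothetical user who needs her workstation files and one shared folder.
alice = Subject(
    name="alice",
    grants=frozenset({
        ("read", "workstation:/home/alice"),
        ("write", "workstation:/home/alice"),
        ("read", "fileserver:/shared/reports"),
    }),
)

print(is_allowed(alice, "read", "fileserver:/shared/reports"))   # True
print(is_allowed(alice, "write", "fileserver:/shared/reports"))  # False
print(is_allowed(alice, "read", "database:payroll"))             # False
```

Because the grant set starts empty, any right the subject holds has to be added deliberately, which keeps the potential for harm limited to what the task requires.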

The concept of least privilege applies to more network security issues than just providing users with specific rights and permissions. When trust relationships are created, they should not be implemented in such a way that everyone trusts each other simply because it is easier to set it up that way. One domain should trust another for very specific reasons, and the implementers should have a full understanding of what the trust relationship allows between two domains. If one domain trusts another, do all of the users automatically become trusted, and can they thus easily access any and all resources on the other domain? Is this a good idea? Can a more secure method provide the same functionality? If a trusted relationship is implemented such that users in one group can access a plotter or printer that is available on only one domain, for example, it might make sense to purchase another plotter so that other more valuable or sensitive resources are not accessible by the entire group.

Separation of Duties

Another fundamental approach to security is separation of duties. This concept is applicable to physical environments as well as network and host security. Separation of duties ensures that for any given task, more than one individual needs to be involved. The task is broken into different duties, each of which is accomplished by a separate individual. By implementing a task in this manner, no single individual can abuse the system for his or her own gain. This principle has been implemented in the business world, especially financial institutions, for many years. A simple example is a system in which one individual is required to place an order and a separate person is needed to authorize the purchase.
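The order-and-authorize example can be sketched in Python as follows. This is an illustration only: the `PurchaseOrder` class, the `SeparationOfDutiesError` exception, and the names used are hypothetical, and a real system would also need to authenticate the people involved.

```python
class SeparationOfDutiesError(Exception):
    """Raised when one person tries to perform both halves of a task."""


class PurchaseOrder:
    def __init__(self, item: str, amount: float, requested_by: str):
        self.item = item
        self.amount = amount
        self.requested_by = requested_by
        self.approved_by = None  # set only by a different individual

    def approve(self, approver: str) -> None:
        # The person who placed the order may not authorize it.
        if approver == self.requested_by:
            raise SeparationOfDutiesError(
                f"{approver} placed this order and cannot also approve it"
            )
        self.approved_by = approver

    @property
    def is_authorized(self) -> bool:
        return self.approved_by is not None


order = PurchaseOrder("plotter", 1200.00, requested_by="alice")
try:
    order.approve("alice")   # same individual: rejected
except SeparationOfDutiesError as exc:
    print("rejected:", exc)

order.approve("bob")         # a second individual completes the task
print(order.is_authorized)   # True
```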

While separation of duties provides a certain level of checks and balances, it is not without its own drawbacks. Chief among these is the cost required to accomplish the task. This cost is manifested in both time and money. More than one individual is required when a single person could accomplish the task, thus potentially increasing the cost of the task. In addition, with more than one individual involved, a certain delay can be expected as the task must proceed through its various steps.

Implicit Deny

What has become the Internet was originally designed as a friendly environment where everybody agreed to abide by the rules implemented in the various protocols. Today, the Internet is no longer the friendly playground of researchers that it once was. This has resulted in different approaches that might at first seem less than friendly but that are required for security purposes. One of these approaches is implicit deny. Frequently in the network world, decisions concerning access must be made. Often a series of rules will be used to determine whether or not to allow access. If a particular situation is not covered by any of the other rules, the implicit deny approach states that access should not be granted. In other words, if no rule would allow access, then access should not be granted. Implicit deny applies to situations involving both authorization and access.
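A rule list with implicit deny might be sketched in Python as below. The `Rule` class, the `decide` function, and the sample rules are assumptions made for illustration; they do not reproduce any particular firewall's rule syntax.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Rule:
    action: str           # "allow" or "deny"
    protocol: str         # e.g. "tcp" or "udp"
    port: Optional[int]   # None matches any port

    def matches(self, protocol: str, port: int) -> bool:
        return self.protocol == protocol and self.port in (None, port)


def decide(rules: Iterable[Rule], protocol: str, port: int) -> str:
    """Return the action of the first matching rule, otherwise deny."""
    for rule in rules:
        if rule.matches(protocol, port):
            return rule.action
    # Implicit deny: no rule covered this case, so access is refused.
    return "deny"


# Hypothetical rule set.
rules = [
    Rule("allow", "tcp", 443),
    Rule("allow", "tcp", 25),
    Rule("deny", "tcp", 23),
]

print(decide(rules, "tcp", 443))  # allow (explicit rule)
print(decide(rules, "tcp", 23))   # deny  (explicit rule)
print(decide(rules, "udp", 161))  # deny  (no rule matched)
```

The final `return "deny"` is the whole technique: traffic that nobody thought to write a rule for is refused rather than allowed.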

Job Rotation

Job rotation is an approach to enhancing security that is gaining increasing attention. The benefits of rotating individuals through various jobs in an organization's IT department have been discussed for a while. By rotating through jobs, individuals gain a better perspective of how the various parts of IT can enhance (or hinder) the business. Since security is often a misunderstood aspect of IT, rotating individuals through security positions can result in a much wider understanding of the security problems throughout the organization. It also can have the side benefit of not relying on any one individual too heavily for security expertise. When all security tasks are the domain of one employee, and if that individual were to leave suddenly, security at the organization could suffer. On the other hand, if security tasks were understood by many different individuals, the loss of any one individual would have less of an impact on the organization.

One significant drawback to job rotation is relying on it too heavily. The IT world is very technical and often expertise in any single aspect takes years to develop. This is especially true in the security environment. In addition, the rapidly changing threat environment with new vulnerabilities and exploits routinely being discovered requires a level of understanding that takes considerable time to acquire and maintain.

Layered Security

A bank does not protect the money that it stores only by placing it in a vault. It uses one or more security guards as a first defense to watch for suspicious activities and to secure the facility when the bank is closed. It probably uses monitoring systems to watch various activities that take place in the bank, whether involving customers or employees. The vault is usually located in the center of the facility, and layers of rooms or walls also protect access to the vault. Access control ensures that the people who want to enter the vault have been granted the appropriate authorization before they are allowed access, and the systems, including manual switches, are connected directly to the police station in case a determined bank robber successfully penetrates any one of these layers of protection.

[Figure: layers of security, labeled Network, Applications, and Data & Resources]

Networks should utilize the same type of layered security architecture. No system is 100 percent secure and nothing is foolproof, so no single specific protection mechanism should ever be trusted alone. Every piece of software and every device can be compromised in some way, and every encryption algorithm can be broken by someone with enough time and resources. The goal of security is to make the effort of actually accomplishing a compromise more costly in time and effort than it is worth to a potential attacker.

Consider, for example, the steps an intruder has to take to access critical data held within a company's back-end database. The intruder will first need to penetrate the firewall and use packets and methods that will not be identified and detected by the IDS. The attacker will have to circumvent an internal router performing packet filtering and possibly penetrate another firewall that is used to separate one internal network from another.

From here, the intruder must break the access controls on the database, which means performing a dictionary or brute force attack to be able to authenticate to the database software. Once the intruder has gotten this far, he still needs to locate the data within the database. This can in turn be complicated by the use of access control lists (ACLs) outlining who can actually view or modify the data. That's a lot of work.
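The intruder's path described above can be modeled, very roughly, as a chain of independent checks that must all be passed. In the Python sketch below every layer function, request field, and value is a hypothetical stand-in for a real control; the point is only that defeating one layer still leaves the others in place.

```python
from typing import Callable, Dict, List, Optional, Tuple

Request = Dict[str, object]


def perimeter_firewall(req: Request) -> bool:
    return req.get("port") == 443


def intrusion_detection(req: Request) -> bool:
    return not req.get("matches_attack_signature", False)


def internal_packet_filter(req: Request) -> bool:
    return req.get("source_zone") == "app-tier"


def database_authentication(req: Request) -> bool:
    return req.get("credentials_valid") is True


def database_acl(req: Request) -> bool:
    return req.get("user") in {"report_service"}


# Layers are ordered from the network perimeter inward to the data.
LAYERS: List[Tuple[str, Callable[[Request], bool]]] = [
    ("perimeter firewall", perimeter_firewall),
    ("intrusion detection", intrusion_detection),
    ("internal packet filter", internal_packet_filter),
    ("database authentication", database_authentication),
    ("database ACL", database_acl),
]


def first_blocking_layer(req: Request) -> Optional[str]:
    """Return the name of the first layer that stops the request, or None."""
    for name, check in LAYERS:
        if not check(req):
            return name
    return None


# An attempt that slips past the firewall and the IDS but comes from the DMZ.
attempt = {
    "port": 443,
    "matches_attack_signature": False,
    "source_zone": "dmz",
    "credentials_valid": True,
    "user": "report_service",
}
print(first_blocking_layer(attempt))  # internal packet filter
```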

Diversity of Defense

Diversity of defense is a concept that complements the idea of various layers of security; layers are made dissimilar so that even if an attacker knows how to get through a system making up one layer, she might not know how to get through a different type of layer that employs a different system for security.

If, for example, an environment has two firewalls that form a demilitarized zone (a DMZ is the area between the two firewalls that provides an environment where activities can be more closely monitored), one firewall can be placed at the perimeter, between the Internet and the DMZ. This firewall analyzes traffic that passes through that specific access point and enforces certain types of restrictions. The other firewall can be placed between the DMZ and the internal network. When applying the diversity of defense concept, you should set up these two firewalls to filter for different types of traffic and provide different types of restrictions. The first firewall, for example, can make sure that no File Transfer Protocol (FTP), Simple Network Management Protocol (SNMP), or Telnet traffic enters the network, but allow Simple Mail Transfer Protocol (SMTP), Secure Shell (SSH), Hypertext Transfer Protocol (HTTP), and SSL traffic through. The second firewall may not allow SSL or SSH through and can interrogate SMTP and HTTP traffic to make sure that certain types of attacks are not part of that traffic.
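The two-firewall arrangement can be sketched in Python as two filters with deliberately different policies. The protocol sets follow the example above, while the function names and the `SUSPICIOUS_MARKERS` used for content inspection are hypothetical placeholders, not a real inspection engine.

```python
# Outer firewall (Internet to DMZ): FTP, SNMP, and Telnet are not listed,
# so they are dropped; SMTP, SSH, HTTP, and SSL are let through.
OUTER_ALLOWED = {"smtp", "ssh", "http", "ssl"}

# Inner firewall (DMZ to internal network): SSL and SSH are not allowed,
# and SMTP and HTTP payloads are inspected.
INNER_ALLOWED = {"smtp", "http"}

# Hypothetical attack markers, for illustration only.
SUSPICIOUS_MARKERS = ("../", "<script")


def outer_firewall(protocol: str, payload: str) -> bool:
    return protocol in OUTER_ALLOWED


def inner_firewall(protocol: str, payload: str) -> bool:
    if protocol not in INNER_ALLOWED:
        return False
    lowered = payload.lower()
    return not any(marker in lowered for marker in SUSPICIOUS_MARKERS)


def reaches_internal_network(protocol: str, payload: str) -> bool:
    """Traffic must satisfy both, differently configured, firewalls."""
    return outer_firewall(protocol, payload) and inner_firewall(protocol, payload)


print(reaches_internal_network("telnet", "login"))                 # False
print(reaches_internal_network("ssh", "session"))                  # False
print(reaches_internal_network("http", "GET /index.html"))         # True
print(reaches_internal_network("http", "GET /../../etc/passwd"))   # False
```

SSH traffic shows the idea: it passes the outer firewall but is stopped by the inner one, because the two layers do not share a policy.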

Another type of diversity of defense is to use products from different vendors. Every product has its own security vulnerabilities that are usually known to experienced attackers in the community. A Check Point firewall, for example, has different security issues and settings than a Sidewinder firewall; thus, different exploits can be used to crash or compromise them in some fashion. Combining this type of diversity with the preceding example, you might use the Check Point firewall as the first line of defense. If attackers are able to penetrate it, they are less likely to get through the next firewall if it is a Cisco PIX or Sidewinder firewall (or another maker's firewall).

Security Through Obscurity

With security through obscurity, security is considered effective if the environment and protection mechanisms are confusing or supposedly not generally known. Security through obscurity uses the approach of protecting something by hiding it: out of sight, out of mind. Non-computer examples of this concept include hiding your briefcase or purse if you leave it in the car so that it is not in plain view, hiding a house key under a ceramic frog on your porch, or pushing your favorite ice cream to the back of the freezer so that nobody else will see it. This approach, however, does not provide actual protection of the object. Someone can still steal the purse by breaking into the car, lift the ceramic frog and find the key, or dig through the items in the freezer to find the ice cream. Security through obscurity may make someone work a little harder to accomplish a task, but it does not prevent anyone from eventually succeeding.

Similar approaches occur in computer and network security when attempting to hide certain objects. A network administrator can, for instance, move a service from its default port to a different port so that others will not know how to access it as easily, or a firewall can be configured to hide specific information about the internal network in the hope that potential attackers will not obtain the information for use in an attack on the network.

Keep It Simple

The terms security and complexity are often at odds with each other, because the more complex something is, the more difficult it is to understand, and you cannot truly secure something if you do not understand it. Another reason complexity is a problem within security is that it usually allows too many opportunities for something to go wrong. An application with 4000 lines of code has far fewer places for buffer overflows, for example, than an application with 2 million lines of code.

As with any other type of technology, when something goes wrong with security mechanisms, a troubleshooting process is used to identify the problem. If the mechanism is overly complex, identifying the root of the problem can be overwhelming if not impossible. Security is already a very complex issue because many variables are involved, many types of attacks and vulnerabilities are possible, many different types of resources must be secure, and many different ways can be used to secure them. You want your security processes and tools to be as simple and elegant as possible. They should be simple to troubleshoot, simple to use, and simple to administer.